Release notes

We released v0.1 of the Digital Assessment Maturity Model in March 2023, and spent the rest of the month talking to people from the sector and refining our initial work. This blog post lays out version 0.2 of the model and outlines how it may be used.

Current assessment landscape

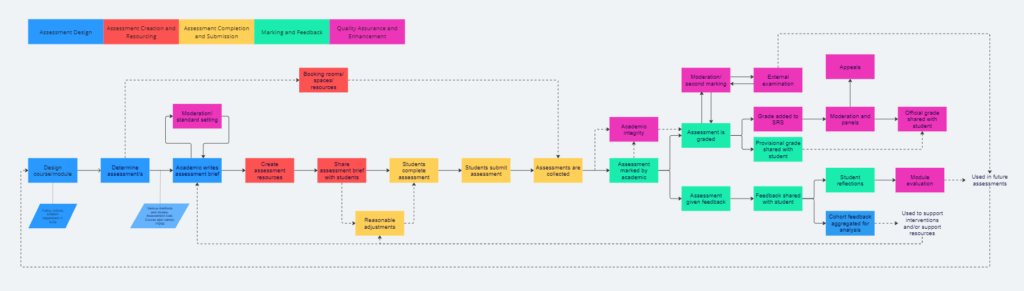

Assessment processes are complex [Citation needed]. Different universities do things differently and use differing terminology, however, the general process is somewhat similar. Our first step in developing a maturity model was to attempt to define the assessment process, shown below:

Note, there will be considerable institutional nuance, but the process chart aims to capture most of the activities that take place to ensure assessments run effectively.

Our second step was then to identify and define areas of activity within the assessment process. We have called these:

- Assessment design

- Assessment creation and resourcing

- Assessment completion and submission

- Marking and feedback

- Quality assurance and enhancement

These were chosen because they often require the work of different groups of people within the institution. For example, timetabling and estates teams may focus only on assessment creation and resourcing activities, in relation to scheduling on-campus digital exams. This division also allows us to tag relevant resources by area of activity in the future.

We are aware that this is an incredibly simplified process map compared to sector practice, and that the five activity areas are inextricably linked to one another, even if that is not immediately apparent from the process map.

Growing digital assessment

Digital assessment has increased in UK higher education over the last five years, driven by institutional strategy and greatly accelerated by the COVID-19 pandemic. Universities and colleges may have found themselves unprepared or underprepared for a mass move to online exams, for example, and innovation in practice happened at pace.

In these circumstances, enhancements to digital assessment may not have occurred evenly across all aspects of the assessment process. You may have concentrated efforts on creation and resourcing, or completion and submission, as these are the most visible to students, and given less consideration to digitising quality assurance and enhancement.

It is worth noting that while enhancement does not need to be entirely even across the five activity areas, as you progress with digitising assessment, the links between areas can cause bottlenecks in your overall process where those areas are at different points in their maturity journey.

Creating the maturity model

We started from the assumption that accessibility is baked into all aspects of the assessment process, and that digital assessment meets all the legal and moral requirements currently in place. While this assumption may be fair, where it does not hold, accessibility should be addressed before making any other changes suggested by this maturity model.

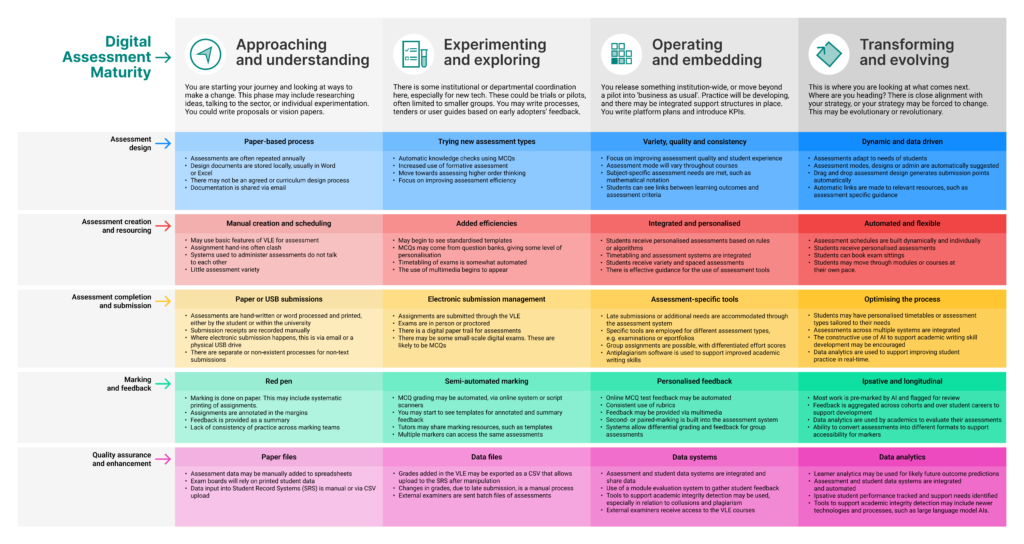

We have split the maturity model into four stages, with each stage denoting a significant shift in what you may observe. The stages are:

- Approaching and understanding

- Experimenting and exploring

- Operating and embedding

- Transforming and evolving

The progression from one stage to the next is cumulative; each stage builds on the one before. Within each stage, we have attempted to identify a non-exhaustive list of activities from across the wide spectrum of assessment practice as examples of what you might see at that stage.

The model is not intended to be a pathway you progress along, but rather a self-assessment checkpoint and a source of suggestions for the future of digital assessment in your institution. For many institutions, moving further along the Digital Assessment Maturity Model may not be appropriate or desirable. Indeed, reaching ‘transforming and evolving’ may not be compatible with your internal practices or strategies.

Please don’t use this as a checklist to determine your stage; use it as a holistic tool that suggests a potential direction of travel. The model will bear some relation to your institutional assessment strategy; however, you should prioritise alignment between your strategy and your practices over alignment with this model. The most value may come from comparing your maturity stages across all five areas of activity of the assessment process, especially where there are wide discrepancies, and using that comparison to help update and renew institutional strategies.
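For teams who want to record the cross-area comparison suggested above, here is a minimal Python sketch. It assumes the institution has already reached a holistic judgement of its stage in each of the five areas of activity; the model itself defines no numeric scoring, so the `Stage` numbering, the `stage_spread` and `lagging_areas` helpers and the two-stage threshold are hypothetical illustrations, not part of the model.

```python
from enum import IntEnum


# The four stages of the model (v0.2), numbered only so they can be compared.
# The numbering is a hypothetical convenience, not a score defined by the model.
class Stage(IntEnum):
    APPROACHING_AND_UNDERSTANDING = 1
    EXPERIMENTING_AND_EXPLORING = 2
    OPERATING_AND_EMBEDDING = 3
    TRANSFORMING_AND_EVOLVING = 4


# The five areas of activity in the assessment process.
AREAS = (
    "Assessment design",
    "Assessment creation and resourcing",
    "Assessment completion and submission",
    "Marking and feedback",
    "Quality assurance and enhancement",
)


def stage_spread(self_assessment: dict[str, Stage]) -> int:
    """Return the gap, in stages, between the most and least mature areas.

    The input stages are the institution's own holistic judgements,
    not the result of ticking off examples as a checklist.
    """
    missing = set(AREAS) - set(self_assessment)
    if missing:
        raise ValueError(f"No stage recorded for: {sorted(missing)}")
    stages = [self_assessment[area] for area in AREAS]
    return max(stages) - min(stages)


def lagging_areas(self_assessment: dict[str, Stage], threshold: int = 2) -> list[str]:
    """List areas at least `threshold` stages behind the most mature area."""
    top = max(self_assessment[area] for area in AREAS)
    return [area for area in AREAS if top - self_assessment[area] >= threshold]


# Hypothetical example mirroring the pattern described earlier: creation and
# submission have moved ahead, while quality assurance has been digitised less.
example = {
    "Assessment design": Stage.EXPERIMENTING_AND_EXPLORING,
    "Assessment creation and resourcing": Stage.OPERATING_AND_EMBEDDING,
    "Assessment completion and submission": Stage.OPERATING_AND_EMBEDDING,
    "Marking and feedback": Stage.EXPERIMENTING_AND_EXPLORING,
    "Quality assurance and enhancement": Stage.APPROACHING_AND_UNDERSTANDING,
}

print(stage_spread(example))   # 2
print(lagging_areas(example))  # ['Quality assurance and enhancement']
```

A wide spread is not a failing in itself; it simply points to areas where the links between stages of the process could create bottlenecks and may be worth discussing when renewing strategy.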

Remember that Jisc has a range of expertise and experience in supporting the development of digital assessment, as well as purchasing frameworks and services, so please reach out to your Relationship Manager for more information.

Considerations and constraints

We have purposely avoided assessment methods and methodologies, as they are often subjective and pedagogically driven (don’t worry, we are looking at that elsewhere in Jisc). We have tried to focus on the digital assessment process and the underlying technologies as enablers.

Introducing the model v0.2

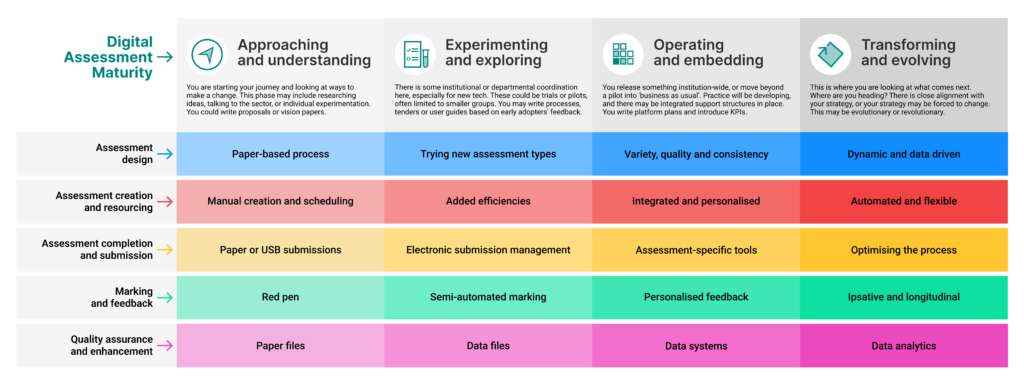

We have structured the Digital Assessment Maturity Model so you can scan across each area of activity of the assessment process and get a quick understanding of the differences between each stage of maturity. Each element has a summary title that gives a very basic overview, followed by some examples of what you might see.

In addition, we have created an abridged version.

Key changes

Based on sector feedback, we made a number of notable changes to the model.

The most obvious change from v0.1 is the move away from a graph to a more tabular representation. While it is anticipated that institutions will move through the model from left to right, the graph gave the appearance that ‘rightmost was better’. Some institutions will not find that to be the case.

The second biggest change was the reduction of the model from five to four stages by merging ‘operational’ and ‘embedding’. We also took the opportunity to tweak some of the terminology and provide more context for what each stage means. Although this takes us away from existing Jisc maturity model nomenclature, we felt it made the model more relevant and usable.

The third biggest change is the restructuring of information to provide better coverage of activities and to layer content detail. Where we previously had two levels of detail (the model and the blog posts), we now have three: a summary headline, what you might see, and the blog posts. This should ensure the model is useful both as an in-depth guide and as an ‘at-a-glance’ tool. This may necessitate rewriting the blog posts, but that was outside the scope of the current work.

Further changes include:

- ‘Quality management’ was changed to ‘quality assurance and enhancement’ to better match sector terminology

- The assessment process was added, and colour-coded against the model for ease of reference

- Additional emphasis was placed on the examples in each stage being indicative of what you might see, rather than a checklist of things to be achieved

- ‘Phases’ was dropped in favour of ‘areas of activity’ when describing the assessment process

- With a view to adding interactivity in the future, we created two versions of the model: one with ‘what you might see’ examples, and one without

Next steps

Working alongside colleagues from Jisc and the sector, we will be further refining the model, particularly the terminology and examples at each stage. We especially hope to engage with colleagues in quality enhancement, timetabling and estates to assist with these refinements.

As the accompanying blog posts are largely redundant now that much more information has been moved into the model, we intend to rewrite them. We may repurpose them to provide additional context or next steps.

If you are interested in being part of this review, please contact us. If you have any comments or questions, please add them below.
